RELIC

Background

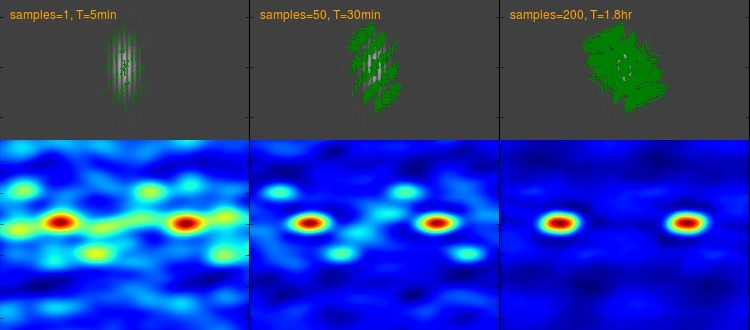

Successive output from APSYNSIM interferometry simulation showing improving image reconstruction (bottom) as baseline coverage increases (top).

Terrestrial radio telescope arrays have been constructed that span tens of kilometers with dozens of antennas, and international collaborations have joined a handful of individual telescopes together to span multiple continents, with baselines up to ~8,000 km. To capture the best selection of baselines for different targets throughout the sky, reconfigurable arrays have telescopes mounted on mobile platforms that are repositioned between observations. In addition, the rotation of the Earth sweeps a ground-based array through a range of aspect angles with respect to fixed targets in the sky, providing additional baseline variety even within a single long-duration observation. The choice of telescope layouts for terrestrial interferometers has been carefully studied, yielding recommendations of special irregular patterns that depend on the target's celestial position and shape. These patterns help avoid the duplicate distances and angles in the baselines between telescope pairs that might occur with a more regular layout, and which contribute little to the array's imaging capability.
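The point about duplicate baselines can be illustrated with a minimal one-dimensional sketch. The antenna positions below are hypothetical, unitless values chosen for illustration, and the function name `baseline_counts` is an assumption; real layouts are two-dimensional and must also account for baseline angle.

```python
from collections import Counter
from itertools import combinations


def baseline_counts(positions):
    """Count how often each pairwise separation occurs along a line."""
    return Counter(abs(b - a) for a, b in combinations(positions, 2))


# Hypothetical layouts with four antennas each (six antenna pairs).
regular = [0, 1, 2, 3]    # evenly spaced
irregular = [0, 1, 4, 6]  # spacing chosen so that no separation repeats

regular_counts = baseline_counts(regular)
irregular_counts = baseline_counts(irregular)

print("regular:", len(regular_counts), "distinct baselines")
print("irregular:", len(irregular_counts), "distinct baselines")
```

With the same number of antennas, the evenly spaced layout yields only three distinct separations, while the irregular one yields six.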

Radio-bright jets of the Hercules A galaxy.

NASA, ESA, S. Baum and C. O'Dea (RIT), R. Perley and W. Cotton (NRAO/AUI/NSF), and the Hubble Heritage Team (STScI/AURA)

A constellation of small spacecraft, each equipped with a radio-frequency sensor, can leverage interferometry to combine their individually modest detection capabilities into a synthetic aperture instrument with much greater resolving power. A space-based array could access baseline distances that are unachievable on the Earth's surface, and could do so from a privileged vantage above the obscuring effects of the atmosphere, terrestrial noise sources, and the horizon. Furthermore, the relative orbital motions of the spacecraft would continuously change the constellation geometry and thus sample a large variety of baseline distances and angles.

Problem

While ground-based interferometer design has been well studied, space-based interferometer constellations introduce many additional degrees of freedom. The mission design for such a constellation must balance many competing variables: science quality, number of craft, total launch mass, data storage, communication topology, fuel costs, target coverage, mission operability, fault tolerance, etc. In particular, the orbital geometry selected for each member craft directly impacts the detection capabilities of the whole constellation, as well as the later data communication loads. Moreover, the geometry of the constellation may be modified during the mission by expending maneuvering propellant to boost individual spacecraft to different orbits, further widening the range of possible mission scenarios. The problem is how to select high-quality spacecraft orbits and other constellation features within such a vast mission design space, so as to focus further human attention on only the most promising possibilities.

Impact

Automated analysis and trade space exploration have the potential to significantly improve the mission design process by focusing human creativity on the highest quality solutions. This is particularly true for interferometry missions, where even more design variables are under consideration. In addition, effective modeling of future mission operability is becoming more important as mission data volumes increase amid constrained communication resources.

Status

The RELIC mission study used the automated operability modeling to assist in communication hardware selection and data management analysis in 2016. The study then used the automated orbit parameter optimization techniques to narrow down candidate constellation configurations in 2017.

Description

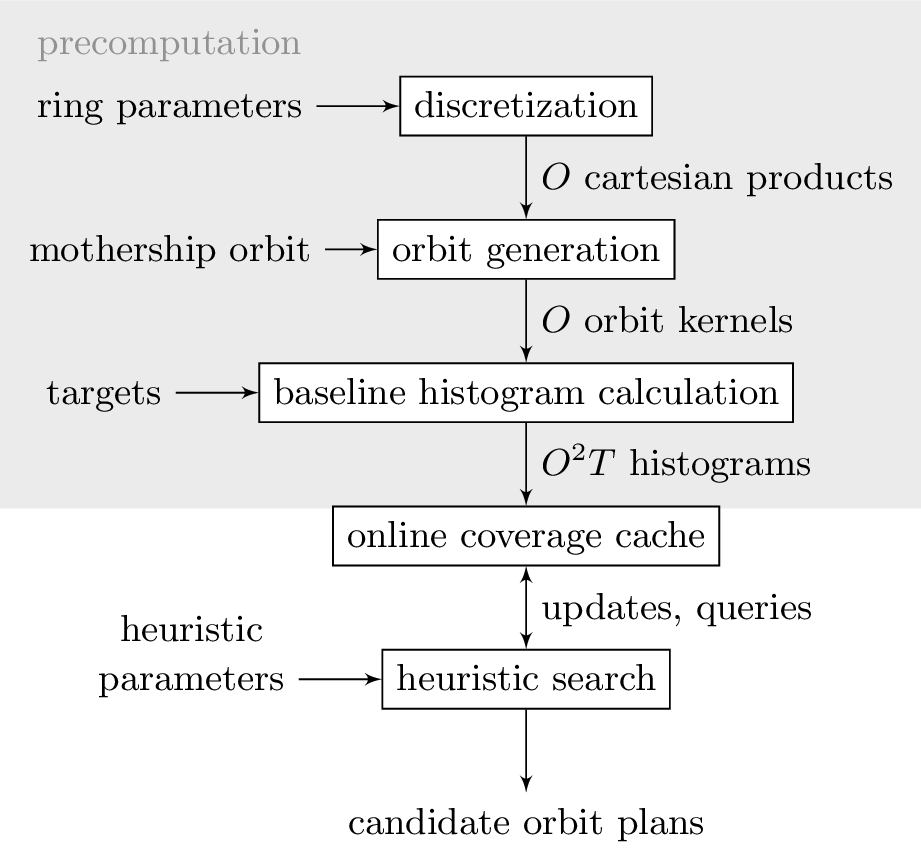

Process data flow, showing significant offline precomputation to speed heuristic optimization among possible orbit selections.

The first step involves significant pre-computation of the geometric relationships between the spacecraft constellation and potential targets in order to accelerate later search steps. A set of provided ranges for constellation ring parameters, such as number of spacecraft, orbital inclination, and total fuel mass, is first discretized to a reasonable density for search. For example, the relative inclinations of the daughter-craft rings may range from 0 to 90 degrees above the mother-ship's orbital plane in increments of 10 degrees. Each element of the Cartesian product of discretized constellation parameters is used to generate a set of coherent constellation orbits, in the form of SPICE kernels. The SPICE kernels are used to calculate the projected baselines between all pairs of orbits with respect to a set of evaluation targets for each candidate constellation. These baselines are then loaded into a histogram baseline-coverage cache that efficiently answers future queries about the contribution of each orbit to different constellation configurations. This cache forms the basis of the baseline-coverage heuristic that guides future search steps.
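The pre-computation steps above can be sketched roughly as follows. This is an illustrative sketch under stated assumptions, not the study's implementation: the spacecraft counts, the toy circular rings produced by `ring_tracks` (standing in for positions that would come from SPICE kernels), the bin count, and all function names are hypothetical. Only the 0 to 90 degree inclination grid comes from the text.

```python
import itertools
import numpy as np

# Inclination grid from the text's example; spacecraft counts are assumed.
INCLINATIONS_DEG = list(range(0, 91, 10))
SPACECRAFT_COUNTS = [4, 6, 8]


def parameter_grid():
    """Cartesian product of the discretized constellation parameters."""
    return list(itertools.product(INCLINATIONS_DEG, SPACECRAFT_COUNTS))


def ring_tracks(inclination_deg, n_craft, radius=1.0, n_samples=32):
    """Toy circular-ring positions, standing in for SPICE kernel lookups."""
    inc = np.radians(inclination_deg)
    t = np.linspace(0.0, 2.0 * np.pi, n_samples, endpoint=False)
    tracks = []
    for k in range(n_craft):
        phase = t + 2.0 * np.pi * k / n_craft
        xyz = np.stack([np.cos(phase),
                        np.sin(phase) * np.cos(inc),
                        np.sin(phase) * np.sin(inc)], axis=1)
        tracks.append(radius * xyz)
    return tracks


def uv_basis(target_dir):
    """Two unit vectors spanning the plane perpendicular to the target."""
    d = np.asarray(target_dir, dtype=float)
    d = d / np.linalg.norm(d)
    # Pick a helper axis that is not nearly parallel to the target direction.
    helper = np.array([0.0, 0.0, 1.0]) if abs(d[2]) < 0.9 else np.array([1.0, 0.0, 0.0])
    e1 = np.cross(helper, d)
    e1 = e1 / np.linalg.norm(e1)
    return e1, np.cross(d, e1)


def build_cache(tracks, target_dir, max_len, n_bins=16):
    """Map each orbit pair (i, j) to a 2D histogram of projected baselines."""
    e1, e2 = uv_basis(target_dir)
    edges = np.linspace(-max_len, max_len, n_bins + 1)
    cache = {}
    for i, j in itertools.combinations(range(len(tracks)), 2):
        sep = tracks[j] - tracks[i]  # separation vectors over time
        hist, _, _ = np.histogram2d(sep @ e1, sep @ e2, bins=[edges, edges])
        cache[(i, j)] = hist
    return cache


def coverage(cache, members):
    """Number of baseline bins hit by at least one pair within `members`."""
    pairs = itertools.combinations(sorted(members), 2)
    total = sum(cache[pair] for pair in pairs)
    return int(np.count_nonzero(total))


grid = parameter_grid()
cache = build_cache(ring_tracks(45, 4), target_dir=(0.0, 0.0, 1.0), max_len=2.5)
print(len(grid), "grid points;", coverage(cache, {0, 1, 2, 3}), "bins covered")
```

Storing one histogram per orbit pair means the coverage of any candidate subset can be evaluated by summing cached arrays, without recomputing geometry.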

Subsequently, the ideal constellation for a given set of constraints is "grown" via heuristic-guided iterative search. Several separate search strategies were evaluated, including hybrid combinations of such strategies:

- Forward-Greedy: score each possible next orbit based on how much it improves the current constellation if added, and then add the single maximum-scoring orbit as a new member of the constellation.
- Reverse-Greedy: score each current orbit based on how much the constellation suffers if it is removed, and then remove the single least-damaging orbit from the constellation.
- Accordion-Greedy: alternate between forward and reverse greedy phases of search with a specified cadence, eventually arriving at a target constellation size.
- Risk-Aware: additionally account for a given probability of spacecraft loss during the mission by sampling across possible loss scenarios when scoring orbit contributions.
- Fuel-Aware: account for the propellant mass expended to achieve different spacecraft orbits (e.g. higher inclination rings require much more fuel) by weighting it alongside baseline coverage.

Throughout the search process, the baseline-coverage cache is kept updated to allow fast access to scoring queries relevant to the current constellation.
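A minimal sketch of the forward and reverse greedy steps, with an optional fuel penalty, might look like the following. The toy `PAIR_BINS` table (which baseline bins each orbit pair covers), the fuel costs, the linear fuel weighting, and the function names are all hypothetical stand-ins for the cached coverage data and scoring described above.

```python
from itertools import combinations

# Hypothetical cache: baseline bins covered by each pair of candidate orbits.
PAIR_BINS = {
    (0, 1): {1, 2}, (0, 2): {2, 3, 4}, (0, 3): {1},
    (1, 2): {5},    (1, 3): {1, 2},    (2, 3): {6, 7},
}


def coverage(members, pair_bins=PAIR_BINS):
    """Count distinct baseline bins covered by all pairs within `members`."""
    covered = set()
    for pair in combinations(sorted(members), 2):
        covered |= pair_bins.get(pair, set())
    return len(covered)


def forward_greedy_step(members, candidates, fuel_cost=None, fuel_weight=0.0):
    """Add the candidate orbit with the best coverage gain minus fuel penalty."""
    base = coverage(members)

    def score(orbit):
        gain = coverage(members | {orbit}) - base
        penalty = fuel_weight * fuel_cost[orbit] if fuel_cost else 0.0
        return gain - penalty

    best = max(sorted(candidates - members), key=score)
    return members | {best}


def reverse_greedy_step(members):
    """Remove the orbit whose loss leaves the most coverage behind."""
    least_damaging = max(sorted(members), key=lambda o: coverage(members - {o}))
    return members - {least_damaging}


def grow(seed, candidates, target_size, **kwargs):
    """Repeat forward-greedy steps until the constellation reaches target_size."""
    members = {seed}
    while len(members) < target_size:
        members = forward_greedy_step(members, candidates, **kwargs)
    return members


all_orbits = {0, 1, 2, 3}
grown = grow(0, all_orbits, target_size=3)
pruned = reverse_greedy_step(all_orbits)
print("grown:", sorted(grown), "pruned:", sorted(pruned))
```

An accordion-style search could alternate calls to `forward_greedy_step` and `reverse_greedy_step`. In this toy data the two directions happen to agree on the same three-orbit constellation, which need not hold in general.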

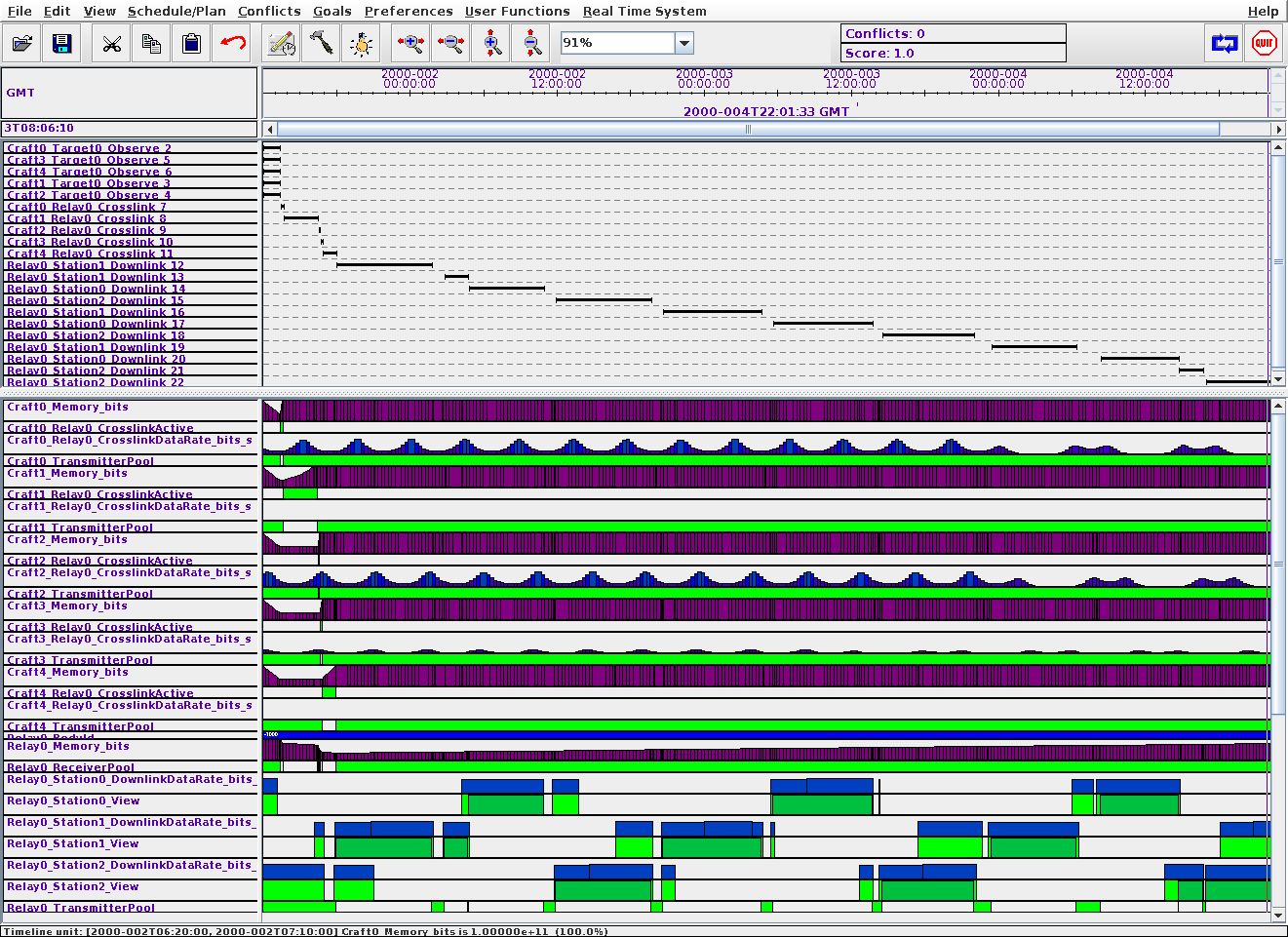

Screenshot of ASPEN data communication plan for a small constellation, showing initial observation period, variable data-rate relay cross-link to mothership, and final downlink to Earth ground stations.

Different parameters of the constellation beyond geometry are also evaluated during this operations simulation step, including relative sizing of communication equipment on the mothership versus daughter-ships. A final report gives the mission lifetime and consumable resources needed to support a given observation campaign using each candidate constellation, as well as the final image quality measures that might be expected. Human mission designers can then use the output of the automated analysis to help trade capabilities versus equipment costs, operational costs, launch costs, etc.